SPECsfs2008_nfs.v3 Result

SGI : SGI NAS (32TB-4U-P)

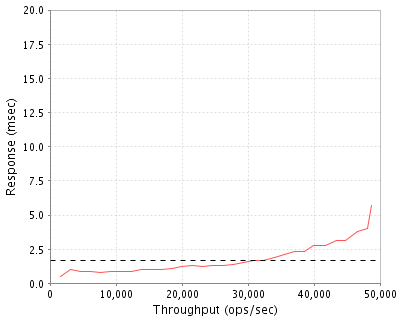

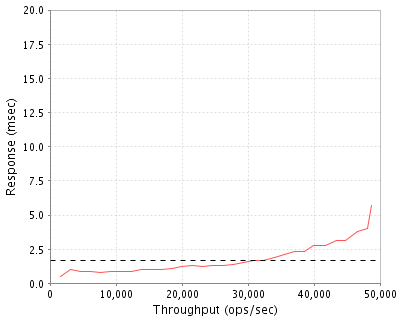

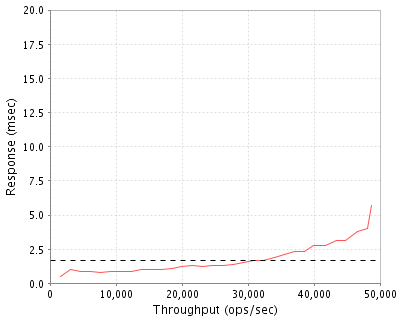

SPECsfs2008_nfs.v3 = 48770 Ops/Sec (Overall Response Time = 1.66 msec)

Performance

| Throughput (ops/sec) | Response (msec) |
|---|---|
| 1536 | 0.5 |
| 3073 | 1.0 |
| 4610 | 0.9 |
| 6150 | 0.9 |
| 7689 | 0.8 |
| 9225 | 0.9 |
| 10766 | 0.9 |
| 12310 | 0.9 |
| 13822 | 1.0 |
| 15437 | 1.0 |
| 16886 | 1.0 |
| 18523 | 1.1 |
| 19956 | 1.2 |
| 21564 | 1.3 |
| 23118 | 1.2 |
| 24588 | 1.3 |
| 26224 | 1.3 |
| 27735 | 1.4 |
| 29292 | 1.5 |
| 30839 | 1.7 |
| 32384 | 1.7 |
| 33956 | 1.9 |
| 35531 | 2.1 |
| 37075 | 2.3 |
| 38619 | 2.3 |
| 39965 | 2.8 |
| 41678 | 2.8 |
| 43425 | 3.1 |
| 44759 | 3.1 |
| 46568 | 3.8 |
| 48155 | 4.0 |
| 48770 | 5.7 |
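The headline Overall Response Time can be roughly related to the Performance table. The sketch below assumes it is the area under the response-versus-throughput curve, integrated with trapezoids from the origin and divided by the peak throughput. That formula is an assumption made here for illustration, not something stated in this report, and the function name is hypothetical. Because the table values are rounded to 0.1 msec, the result only approximates the reported 1.66 msec.

```python
# (throughput in ops/sec, response in msec) pairs copied from the Performance table.
POINTS = [
    (1536, 0.5), (3073, 1.0), (4610, 0.9), (6150, 0.9), (7689, 0.8),
    (9225, 0.9), (10766, 0.9), (12310, 0.9), (13822, 1.0), (15437, 1.0),
    (16886, 1.0), (18523, 1.1), (19956, 1.2), (21564, 1.3), (23118, 1.2),
    (24588, 1.3), (26224, 1.3), (27735, 1.4), (29292, 1.5), (30839, 1.7),
    (32384, 1.7), (33956, 1.9), (35531, 2.1), (37075, 2.3), (38619, 2.3),
    (39965, 2.8), (41678, 2.8), (43425, 3.1), (44759, 3.1), (46568, 3.8),
    (48155, 4.0), (48770, 5.7),
]


def overall_response_time(points):
    """Trapezoidal area under the curve, divided by peak throughput.

    Assumption: the curve is anchored at (0, 0) before the first load point.
    """
    curve = [(0.0, 0.0)] + sorted(points)
    area = 0.0
    for (x0, y0), (x1, y1) in zip(curve, curve[1:]):
        area += (x1 - x0) * (y0 + y1) / 2.0
    peak_throughput = curve[-1][0]
    return area / peak_throughput


if __name__ == "__main__":
    print(f"Approximate overall response time: {overall_response_time(POINTS):.2f} msec")
```

Weighting each response time by the throughput interval it covers explains why the overall figure sits well below the 5.7 msec measured at peak load: most of the curve lies in the 1 to 2 msec range.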

Product and Test Information

| Field | Value |
|---|---|
| Tested By | SGI |
| Product Name | SGI NAS (32TB-4U-P) |
| Hardware Available | July, 2012 |
| Software Available | September, 2012 |
| Date Tested | September, 2012 |
| SFS License Number | 4 |
| Licensee Locations | Fremont, CA |

SGI NAS is a unified storage management platform that delivers enterprise-class features on industry-standard hardware. It helps organizations implement high-performance yet cost-effective data storage through features such as inline deduplication, unlimited snapshots and cloning, hardware independence, and high-availability support. All enterprise features, such as thin provisioning, storage snapshots, cloning, and replication, are built in and carry no additional license or cost. By contrast, a traditional appliance model requires a controller upgrade once performance or the number of spindles reaches a certain level.

Configuration Bill of Materials

| Item No | Qty | Type | Vendor | Model/Name | Description |
|---|---|---|---|---|---|
| 1 | 1 | Base Server | SGI | S9D-NX-CS-SRVR-4U | SGI MIS Server enclosure for one mbd system. 72 3.5 inch drive slots. Single power enclosure and two PSUs. Universal rail kit for any rack type. |
| 2 | 2 | Processor | SGI | S9D-NX-E5-2620 | Intel Xeon E5-2620 95W 2 GHz, SGI NAS |
| 3 | 1 | Motherboard | SGI | S9D-S2600-JF1 | Server motherboard unpopulated for single mbd system. Includes required cables. |
| 4 | 1 | Component | SGI | S9D-RISER-1 | Motherboard riser for Modular InfiniteStorage Server. |
| 5 | 1 | Component | SGI | S9D-RISER-3S | Motherboard riser for Modular InfiniteStorage Server. |
| 6 | 1 | Boot Mirror Set | SGI | S9D-SA325-300-MCIN | Two boot drives and carriers SAS 6G 300 GB 10K 2.5 |
| 7 | 64 | Disk Drive | SGI | DD-SAS35-500G-72-S | HDD SAS 6Gb/s 500 GB 7.2K RPM 3.5 |
| 8 | 2 | SSD | SGI | S9D-NX-S2-8G-ST | RAM 8GB SAS 6G 3.5, SGI NAS |
| 9 | 4 | SSD | SGI | S9D-NX-S2-200G-ST | 6G SAS 200GB 2.5 SGI NAS |
| 10 | 66 | Drive Carrier | SGI | S9D-CARRIER-35 | 3.5" Drive carrier for MIS platform |
| 11 | 4 | Drive Carrier | SGI | S9D-CARRIER-25-15 | 2.5" 15mm Drive carrier for MIS platform |
| 12 | 16 | Memory DIMM | SGI | S9D-MEM-8G | 1600 speed LSX MEM 8G |
| 13 | 2 | HBA | SGI | S9D-SAS-9211-8I | 6Gb SAS HBA with 2 internal x4 SFF8087 miniSAS connectors |
| 14 | 2 | HBA | SGI | S9D-10GENET-OR-2P | Network connectivity 10GbE optical, 2 ports. |
| 15 | 1 | Software | SGI | SDL-NAS-3 | SGI NAS SOFTWARE 3.X |
| 16 | 1 | License | SGI | SR5-S9D-NAS-38TB | Base license Manage up to 38TB of storage |

Server Software

| Field | Value |
|---|---|
| OS Name and Version | Solaris 5.11 |
| Other Software | SGI NAS SOFTWARE 3.1.3.5 |
| Filesystem Software | ZFS |

Server Tuning

| Name | Value | Description |
|---|---|---|
| atime | off | Disable atime updates (all filesystems) |
| zfs:zfs_no_write_throttle | 1 | Do not enforce write throttle |
| nfs:nfs_allow_preepoch_time | 1 | Allow time stamp values to be passed through unchecked. |
| /kernel/drv/scsi_vhci.conf:load-balance | logical-block | Load balance I/O across paths to disk in logical blocks. |

Server Tuning Notes

None.

Disks and Filesystems

| Description | Number of Disks | Usable Size |
|---|---|---|
| SAS 6G 300 GB 10K 2.5 | 2 | 300.0 GB |
| SSD/RAM 8GB SAS 6G 3.5, SGI NAS | 2 | 8.0 GB |
| HDD SAS 6Gb/s 500 GB 7.2K RPM 3.5 | 64 | 15.6 TB |
| SSD 6G SAS 200GB 2.5, SGI NAS | 4 | 800.0 GB |
| Total | 72 | 16.7 TB |

| Field | Value |
|---|---|
| Number of Filesystems | 4 |
| Total Exported Capacity | 16000 GB |
| Filesystem Type | ZFS |
| Filesystem Creation Options | Select "Performance Optimized" in the administrative GUI to create one mirrored zpool with 32 vdevs of 2 disks each, 4xL2ARC, and 2xSLOG. Then create 4 folders with atime=off and NFS export the folders. |
| Filesystem Config | ZFS stripes the storage pool across all the vdevs/mirror sets. The exported folders share all the space in the storage pool. |
| Fileset Size | 5760.9 GB |

Factory preset configuration "Performance Optimized". The storage configuration includes 64 disk drives, 2 write flash devices, and 4 read flash accelerators. A single pool is created by mirroring pairs of disks, then striping across all the mirror sets. The write flash accelerator is used for the ZFS Intent Log (ZIL) and the read flash accelerator is used as a Level 2 Adaptive Replacement Cache (L2ARC) for the pool. The pool is configured with 4 file systems.
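The usable sizes in the Disks and Filesystems table can be cross-checked with simple arithmetic. The sketch below assumes two-way mirroring for the boot set, the write flash devices, and the data disks, no redundancy for the L2ARC devices, and 1024 GB per TB. These are inferences chosen because they reproduce the reported figures, not statements from the report, and all names in the code are illustrative.

```python
# (description, number of disks, size per disk in GB, copies kept of each block)
# The "copies" column is an assumption inferred from the reported usable sizes.
GROUPS = [
    ("Boot mirror, SAS 300 GB", 2, 300, 2),   # two-disk mirror
    ("Write flash (SLOG), 8 GB", 2, 8, 2),    # assumed mirrored: usable is 8.0 GB
    ("Data HDD, 500 GB", 64, 500, 2),         # 32 vdevs of 2 mirrored disks each
    ("Read flash (L2ARC), 200 GB", 4, 200, 1),  # cache devices, no redundancy
]

GB_PER_TB = 1024  # assumption: binary-style conversion matches the table


def usable_gb(disks, size_gb, copies):
    """Usable capacity of a group when each block is stored `copies` times."""
    return disks * size_gb / copies


total_disks = sum(disks for _, disks, _, _ in GROUPS)
total_usable_gb = sum(usable_gb(d, s, c) for _, d, s, c in GROUPS)
data_pool_gb = usable_gb(64, 500, 2)

if __name__ == "__main__":
    for name, disks, size_gb, copies in GROUPS:
        print(f"{name}: {usable_gb(disks, size_gb, copies):.1f} GB usable")
    print(f"Data pool: {data_pool_gb:.0f} GB = {data_pool_gb / GB_PER_TB:.1f} TB")
    print(f"Total: {total_disks} disks, {total_usable_gb / GB_PER_TB:.1f} TB")
```

Under these assumptions the mirrored data pool comes to 16000 GB, which equals the Total Exported Capacity, so the four exported folders span the whole pool.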

Network Configuration

| Item No | Network Type | Number of Ports Used | Notes |
|---|---|---|---|
| 1 | 10 Gigabit Ethernet (Optical) | 4 | Each port used as an independent subnet, not bonded. |

Network Configuration Notes

All ports connect to one 10 GbE switch.

Benchmark Network

Simple star topology around a Blade Network Technologies RackSwitch G8100 24-port 10 GbE switch.

Processing Elements

| Item No | Qty | Type | Description | Processing Function |
|---|---|---|---|---|
| 1 | 2 | CPU | Intel Xeon E5-2620 95W 2 GHz, SGI PN 097 0500 001 | TCP/IP, NFS protocol, ZFS file system, Operating System |

Processing Element Notes

None.

Memory

| Description | Size in GB | Number of Instances | Total GB | Nonvolatile |
|---|---|---|---|---|
| Main memory | 128 | 1 | 128 | V |
| Grand Total Memory Gigabytes | | | 128 | |

Memory Notes

The SGI NAS main memory is used for the Adaptive Replacement Cache (ARC), the data cache, and operating system memory.

Stable Storage

NFS stable write and commit operations are not acknowledged until after the data has been written to the ZIL or to disk.

System Under Test Configuration Notes

Other System Notes

Adaptive Replacement Cache is the algorithm used to manage the file system cache in ZFS.

Test Environment Bill of Materials

| Item No | Qty | Vendor | Model/Name | Description |
|---|---|---|---|---|
| 1 | 8 | SGI | C2112-4TY14 | 4 nodes in each of 2 2U chassis |
| 2 | 1 | Blade Network Technologies | G8100 | 24-port 10 Gigabit Ethernet switch |

Load Generators

| LG Type Name | LG1 |
|---|---|
| BOM Item # | 1 |
| Processor Name | LSX-CPU-E5620 Intel Xeon E5620 processor |
| Processor Speed | 2.4 GHz |
| Number of Processors (chips) | 2 |
| Number of Cores/Chip | 4 |
| Memory Size | 12 GB |
| Operating System | SLES 11 SP 1 |
| Network Type | 1 port 10 GbE |

Load Generator (LG) Configuration

Benchmark Parameters

| Field | Value |
|---|---|
| Network Attached Storage Type | NFS V3 |
| Number of Load Generators | 8 |
| Number of Processes per LG | 32 |
| Biod Max Read Setting | 2 |
| Biod Max Write Setting | 2 |
| Block Size | AUTO |

Testbed Configuration

| LG No | LG Type | Network | Target Filesystems | Notes |
|---|---|---|---|---|
| 1..2 | LG1 | N1 | /volumes/tank0/A,...,/volumes/tank0/D | All clients mount all exported folders. |
| 3..4 | LG1 | N2 | /volumes/tank0/A,...,/volumes/tank0/D | All clients mount all exported folders. |
| 5..6 | LG1 | N3 | /volumes/tank0/A,...,/volumes/tank0/D | All clients mount all exported folders. |
| 7..8 | LG1 | N4 | /volumes/tank0/A,...,/volumes/tank0/D | All clients mount all exported folders. |
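The Benchmark Parameters and Testbed Configuration tables together determine how client load is spread over the four server ports. The sketch below derives the per-port process counts from those tables. The function and variable names are illustrative, and the per-process rate is a simple average at the peak result, not a measured figure.

```python
LOAD_GENERATORS = 8        # Number of Load Generators
PROCS_PER_LG = 32          # Number of Processes per LG
NETWORKS = ["N1", "N2", "N3", "N4"]  # one subnet per 10 GbE server port
PEAK_OPS_PER_SEC = 48770   # headline SPECsfs2008_nfs.v3 throughput


def network_for(lg_no):
    """Map a 1-based load generator number to its subnet (LG 1..2 -> N1, ...)."""
    lgs_per_network = LOAD_GENERATORS // len(NETWORKS)
    return NETWORKS[(lg_no - 1) // lgs_per_network]


total_procs = LOAD_GENERATORS * PROCS_PER_LG

# Count the client processes that send traffic through each server port.
procs_per_network = {name: 0 for name in NETWORKS}
for lg in range(1, LOAD_GENERATORS + 1):
    procs_per_network[network_for(lg)] += PROCS_PER_LG

if __name__ == "__main__":
    print(f"Total client processes: {total_procs}")
    for name, procs in procs_per_network.items():
        print(f"{name}: {procs} processes")
    print(f"Average ops/sec per process at peak: {PEAK_OPS_PER_SEC / total_procs:.1f}")
```

With two load generators per subnet, each server port carries an equal share of the client processes, which is consistent with the uniform access described for this testbed.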

Load Generator Configuration Notes

sysctl variables set in /etc/sysctl.conf:

- kernel.randomize_va_space=0
- net.core.optmem_max=524287
- net.core.rmem_default=524287
- net.core.rmem_max = 2097152
- net.core.wmem_default=524287
- net.core.wmem_max = 2097152
- net.core.netdev_max_backlog=300000
- net.ipv4.tcp_timestamps=1
- net.ipv4.tcp_sack=1
- net.ipv4.tcp_tw_recycle=0
- net.ipv4.tcp_tw_reuse=0
- net.ipv4.neigh.default.gc_thresh1=4096
- net.ipv4.neigh.default.gc_thresh2=8192
- net.ipv4.neigh.default.gc_thresh3=16384
- vm.dirty_background_ratio=10
- vm.dirty_writeback_centisecs=50
- net.ipv4.tcp_rmem=8192 262144 524288
- net.ipv4.tcp_wmem=8192 262144 524288
- net.ipv4.tcp_mem=192480 256640 384960
- kernel.sysrq = 1
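The sysctl settings listed above mix `key=value` and `key = value` spellings. As a minimal sketch, assuming the simple one-setting-per-line layout shown, the following parser normalizes them into a dictionary so that related values can be compared. The function name and the comparison at the end are illustrative and not part of the tested configuration.

```python
SYSCTL_TEXT = """\
kernel.randomize_va_space=0
net.core.optmem_max=524287
net.core.rmem_default=524287
net.core.rmem_max = 2097152
net.core.wmem_default=524287
net.core.wmem_max = 2097152
net.core.netdev_max_backlog=300000
net.ipv4.tcp_timestamps=1
net.ipv4.tcp_sack=1
net.ipv4.tcp_tw_recycle=0
net.ipv4.tcp_tw_reuse=0
net.ipv4.neigh.default.gc_thresh1=4096
net.ipv4.neigh.default.gc_thresh2=8192
net.ipv4.neigh.default.gc_thresh3=16384
vm.dirty_background_ratio=10
vm.dirty_writeback_centisecs=50
net.ipv4.tcp_rmem=8192 262144 524288
net.ipv4.tcp_wmem=8192 262144 524288
net.ipv4.tcp_mem=192480 256640 384960
kernel.sysrq = 1
"""


def parse_sysctl(text):
    """Parse 'key = value' lines, ignoring blank lines and '#' or ';' comments."""
    settings = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith(("#", ";")):
            continue
        key, separator, value = line.partition("=")
        if not separator:
            continue  # not a key/value line
        settings[key.strip()] = value.strip()
    return settings


settings = parse_sysctl(SYSCTL_TEXT)

# tcp_rmem holds three numbers: minimum, default, and maximum buffer size in bytes.
tcp_rmem_min, tcp_rmem_default, tcp_rmem_max = map(int, settings["net.ipv4.tcp_rmem"].split())

if __name__ == "__main__":
    print(f"{len(settings)} settings parsed")
    print(f"TCP receive buffer maximum: {tcp_rmem_max} bytes")
    print(f"net.core.rmem_max: {settings['net.core.rmem_max']} bytes")
```

Parsing the values this way makes it easy to see, for example, that the TCP receive buffer maximum (524288 bytes) sits below the `net.core.rmem_max` ceiling (2097152 bytes).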

Uniform Access Rule Compliance

All clients access all exported folders. All exported folders utilize all available storage.

Other Notes

Config Diagrams

Generated on Mon Feb 25 11:18:51 2013 by SPECsfs2008 HTML Formatter

Copyright © 1997-2008 Standard Performance Evaluation Corporation

First published at SPEC.org on 22-Feb-2013