SPECsfs2008_nfs.v3 Result

Hitachi Data Systems : Hitachi Unified Storage File, Model 4060, Dual Node Cluster

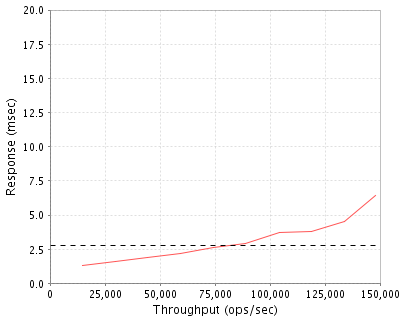

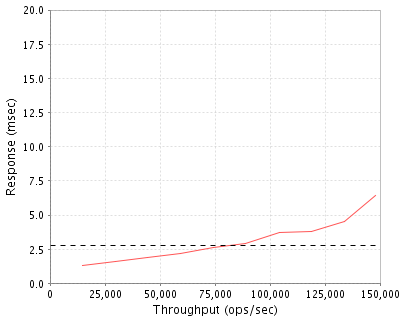

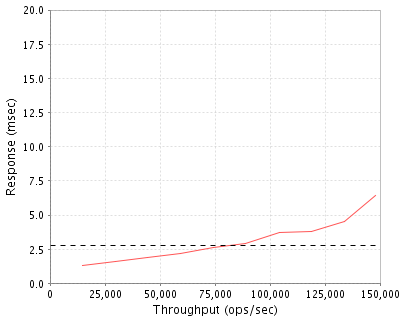

SPECsfs2008_nfs.v3 = 147957 Ops/Sec (Overall Response Time = 2.76 msec)

Performance

| Throughput (ops/sec) | Response (msec) |
|---|---|
| 14807 | 1.3 |
| 29634 | 1.6 |
| 44487 | 1.9 |
| 59377 | 2.2 |
| 74272 | 2.6 |
| 89086 | 2.9 |
| 104183 | 3.7 |
| 118916 | 3.8 |
| 133903 | 4.5 |
| 147957 | 6.4 |
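The overall response time can be related to the table above with a short calculation. The following is a minimal sketch, assuming the usual description of the metric as the area under the response-time versus throughput curve divided by the peak throughput, with the curve anchored at the origin and integrated by the trapezoidal rule. The function name and the origin anchoring are assumptions for illustration, not taken from this report, and the inputs are the rounded values published in the table.

```python
# (throughput in ops/sec, response time in msec) pairs from the Performance table.
points = [
    (14807, 1.3), (29634, 1.6), (44487, 1.9), (59377, 2.2), (74272, 2.6),
    (89086, 2.9), (104183, 3.7), (118916, 3.8), (133903, 4.5), (147957, 6.4),
]


def overall_response_time(points):
    """Area under the response-time curve divided by the peak throughput.

    Assumption: the curve starts at (0 ops/sec, 0 msec) and is integrated
    with the trapezoidal rule.
    """
    curve = [(0, 0.0)] + list(points)
    area = 0.0
    for (x0, y0), (x1, y1) in zip(curve, curve[1:]):
        area += (x1 - x0) * (y0 + y1) / 2.0
    peak_throughput = curve[-1][0]
    return area / peak_throughput


ort = overall_response_time(points)
print(f"{ort:.2f} msec")  # 2.76 msec
```

Under these assumptions the rounded table values reproduce the reported 2.76 msec.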

Product and Test Information

| | |
|---|---|
| Tested By | Hitachi Data Systems |
| Product Name | Hitachi Unified Storage File, Model 4060, Dual Node Cluster |
| Hardware Available | July 2013 |
| Software Available | July 2013 |
| Date Tested | June 2013 |
| SFS License Number | 276 |
| Licensee Locations | Santa Clara, CA, USA |

The Hitachi Unified Storage (HUS) and Hitachi NAS (HNAS) Platform family of products provides multiprotocol support to store and share block, file and object data types. The 4000 series delivers best-in-class performance, scalability, clustering with automated failover, 99.999% availability, non-disruptive upgrades, smart primary deduplication, intelligent file tiering, automated migration, 256TB file system pools and a single namespace up to the maximum usable capacity, and is integrated with the Hitachi Command suite of management and data protection software. The HUS file module uses a hardware-accelerated "Hybrid Core" architecture that accelerates network and file protocol processing to achieve the industry's best performance in terms of both throughput and operations per second. The HUS uses an object-based file system (SiliconFS) and virtualization to deliver the highest scalability in the market, enabling organizations to consolidate file servers and other NAS devices into fewer nodes and storage arrays for simplified management, improved space efficiency and lower energy consumption. Each 4060 node can scale up to 8PB of usable data storage and supports 10GbE LAN access and 8Gbps FC storage connectivity.

Configuration Bill of Materials

| Item No | Qty | Type | Vendor | Model/Name | Description |
|---|---|---|---|---|---|
| 1 | 2 | Server | HDS | SX345371.P | Hitachi NAS 4060 Base System |
| 2 | 1 | Server | HDS | SX345278.P | System Management Unit (SMU) |
| 3 | 2 | Software | HDS | SX365131.P | Hitachi NAS SW - Software Bundle |
| 4 | 8 | FC Interface | HDS | FTLF8528P3BNV.P | SFP+ 8Gbps FC |
| 5 | 12 | Network Interface | HDS | FTLX8571D3BCV.P | SFP+ 10GE |
| 6 | 1 | Storage | HDS | HUS-SOLUTION.S | Hitachi Unified Storage System |
| 7 | 1 | Storage | HDS | HUS150-A0001.S | HUS 150 Rack Mount System |
| 8 | 1 | Disk Controller | HDS | HDF850-CBL.P | HUS 150 Base Controller Box |
| 9 | 2 | Disk Controller | HDS | DF-F850-CTLL.P | HUS 150 Controller |
| 10 | 4 | Cache | HDS | DF-F850-8GB.P | HUS 150 8GB Cache Module |
| 11 | 300 | Disk Drives | HDS | DF-F850-9HGSS.P | HUS 900GB SAS 10K RPM HDD SFF for CBSS/DBS-Base |
| 12 | 13 | Chassis | HDS | DF-F850-DBS.P | HUS Drive Box - SFF 2U x 24 |
| 13 | 4 | FC Interface | HDS | DF-F850-HF8G.P | HUS 150 4x8Gbps FC Interface Adapter |
| 14 | 1 | Rack | HDS | A3BF-AMS-US.P | AMS 19 in rack Americas MIN |
| 15 | 1 | Software | HDS | 044-230199-03.P | HUS 150 Base Operating System M License |
| 16 | 2 | Switch | Brocade | HD-5320-0008.P | Brocade 5320 switch w/48 active ports, 48 SWL 8Gb BR SFPs |

Server Software

| | |
|---|---|
| OS Name and Version | 11.2.3319.04 |
| Other Software | None |
| Filesystem Software | SiliconFS 11.2.3319.04 |

Server Tuning

| Name | Value | Description |
|---|---|---|
| security-mode | UNIX | Security mode is native UNIX |
| cifs_auth | off | Disable CIFS security authorization |
| cache-bias | small-files | Set metadata cache bias to small files |
| fs-accessed-time | off | Accessed time management was turned off |
| shortname | off | Disable short name generation for CIFS clients |
| read-ahead | 0 | Disable file read-ahead |

Server Tuning Notes

None

Disks and Filesystems

| Description | Number of Disks | Usable Size |
|---|---|---|
| 900GB SAS 10K RPM Disks | 300 | 192.1 TB |
| 250GB SATA Disks. These four drives (two mirrored drives per node) are used for storing the core operating system and management logs. No cache or data storage. | 4 | 500.0 GB |
| Total | 304 | 192.6 TB |

| | |
|---|---|
| Number of Filesystems | 4 |
| Total Exported Capacity | 192 TB |
| Filesystem Type | WFS-2 |
| Filesystem Creation Options | 4K filesystem block size |
| Filesystem Config | Each Filesystem was striped across 15 x 4D+1P RAID-5 LUNs (75 HDDs). |
| Fileset Size | 17330.9 GB |

The storage configuration consisted of one Hitachi Unified Storage 150 system (HUS150) configured with dual controllers and 32GB of cache memory. There were 300 10K RPM SAS disks in use for these tests, organized into 60 RAID-5 (4D+1P) LUNs. Eight 8Gbps FC ports were in use across the two controllers. The FC ports were connected to the 4060 nodes via a redundant pair of Brocade 5320 switches. The 4060 nodes were connected to the Brocade 5320 switches via four 8Gbps FC connections, such that a completely redundant path exists from each node to the storage. Hitachi Unified Storage file module nodes have two internal mirrored hard disk drives, which are used to store the core operating software and system logs. These drives are not used for cache space or for storing data.
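The LUN and disk counts quoted above follow from the per-filesystem layout. The sketch below only restates that arithmetic; the variable names are illustrative, and the nominal data capacity it prints is a decimal figure before any formatting or filesystem overhead, which the report does not itemize.

```python
# Layout figures taken from the Disks and Filesystems section.
FILESYSTEMS = 4
LUNS_PER_FILESYSTEM = 15
DATA_DISKS_PER_LUN = 4      # the "4D" in 4D+1P
PARITY_DISKS_PER_LUN = 1    # the "1P" in 4D+1P
DISK_SIZE_GB = 900          # nominal (decimal) drive size

disks_per_lun = DATA_DISKS_PER_LUN + PARITY_DISKS_PER_LUN
total_luns = FILESYSTEMS * LUNS_PER_FILESYSTEM
total_disks = total_luns * disks_per_lun
disks_per_filesystem = LUNS_PER_FILESYSTEM * disks_per_lun
data_disks = total_luns * DATA_DISKS_PER_LUN
parity_fraction = PARITY_DISKS_PER_LUN / disks_per_lun

# Nominal capacity of the data disks only, in decimal TB (assumption:
# no allowance for formatting, spares, or filesystem overhead).
nominal_data_tb = data_disks * DISK_SIZE_GB / 1000

print(total_luns, total_disks, disks_per_filesystem)  # 60 300 75
print(f"parity share: {parity_fraction:.0%}, nominal data capacity: {nominal_data_tb} TB")
```

The nominal figure is an upper bound; the reported usable size of 192.1 TB is lower.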

Network Configuration

| Item No | Network Type | Number of Ports Used | Notes |
|---|---|---|---|
| 1 | 10 Gigabit Ethernet | 4 | Integrated 10GbE Ethernet controller |

Network Configuration Notes

Two 10GbE network interfaces from each 4060 node were connected to a Brocade TurboIron 24X switch, which provided network connectivity to the clients. The interfaces were configured to use jumbo frames.

Benchmark Network

Each LG has an Intel XF SR 10GbE single port PCIe network interface. Each LG connects via a single 10GbE connection to the ports on the Brocade TurboIron 24X network switch.

Processing Elements

| Item No | Qty | Type | Description | Processing Function |
|---|---|---|---|---|
| 1 | 2 | FPGA | Altera Stratix IV EP4SE530 | Filesystem |
| 2 | 4 | FPGA | Altera Stratix IV EP4SGX360 | Network Interface, NFS |
| 3 | 2 | FPGA | Altera Stratix IV EP4SGX290 | Storage Interface |
| 4 | 2 | CPU | Intel Xeon Quad-Core CPU | Management |
| 5 | 2 | CPU | Intel Xeon Dual-Core CPU | HUS150 host I/O management |
| 6 | 2 | ASIC | Hitachi Custom ASIC | HUS150 I/O data engine |

Processing Element Notes

Each 4060 node has 4 FPGAs that are used for processing functions. Each HUS150 storage system controller has an Intel Xeon Dual-Core CPU and Hitachi custom ASIC for I/O processing.

Memory

| Description | Size in GB | Number of Instances | Total GB | Nonvolatile |
|---|---|---|---|---|
| Server Main Memory | 16 | 2 | 32 | V |
| Server Filesystem and Storage Cache | 26 | 2 | 52 | V |
| Server Battery-backed NVRAM | 4 | 2 | 8 | NV |
| Cache Module | 8 | 4 | 32 | NV |
| Grand Total Memory Gigabytes | | | 124 | |

Memory Notes

Each 4060 node has 16GB of main memory that is used for the operating system and in support of the FPGA functions. A further 26GB of memory per node is dedicated to filesystem metadata, sector cache and other purposes. A separate, integrated battery-backed NVRAM module (4GB) on the filesystem board provides stable storage for writes that have not yet been written to disk. The HUS storage system was configured with 32GB of cache memory.
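The grand total in the Memory table can be checked by summing size times instance count for each row. This is a small sketch with illustrative names; the figures are copied from the table.

```python
# (description, size in GB, number of instances, nonvolatile?) from the Memory table.
memory = [
    ("Server Main Memory", 16, 2, False),
    ("Server Filesystem and Storage Cache", 26, 2, False),
    ("Server Battery-backed NVRAM", 4, 2, True),
    ("Cache Module", 8, 4, True),
]

grand_total_gb = sum(size * count for _, size, count, _ in memory)
nonvolatile_gb = sum(size * count for _, size, count, nv in memory if nv)
volatile_gb = grand_total_gb - nonvolatile_gb

print(grand_total_gb)   # 124
print(nonvolatile_gb)   # 40
print(volatile_gb)      # 84
```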

Stable Storage

The Hitachi NAS Platform node first writes incoming data to the battery-backed (72 hours) NVRAM internal to the server. The data in NVRAM is then written to the backend storage system at the earliest opportunity, and always within a few seconds of arriving in the NVRAM. In an active-active cluster configuration, the contents of the NVRAM are synchronously mirrored to ensure that, in the event of a single node failover, any pending transactions can be completed by the remaining node. The data from the node is then written to the battery-backed cache of the backend storage system (a second layer of NVRAM in the entire solution) and is backed up onto the Cache Flash Memory modules in the event of a power outage. The Cache Flash Memory modules in the backend storage system are part of the total solution, but are used only during a power outage and not as cache space.
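The write path described above can be illustrated with a toy model. This is only a sketch of the general idea (log to local NVRAM, mirror synchronously to the partner, flush to the backend later, let the survivor replay the mirror after a failover). All class and function names are hypothetical, and nothing here represents the actual Hitachi implementation.

```python
class Node:
    """Toy cluster node: local NVRAM plus a mirror of the partner's NVRAM."""

    def __init__(self, name):
        self.name = name
        self.nvram = []            # writes acknowledged but not yet on the backend
        self.partner_mirror = []   # synchronous copy of the partner's pending writes
        self.partner = None


def make_cluster():
    a, b = Node("node-a"), Node("node-b")
    a.partner, b.partner = b, a
    return a, b


def client_write(node, data):
    """Log the write locally and on the partner before acknowledging it."""
    node.nvram.append(data)
    node.partner.partner_mirror.append(data)
    return "ack"


def flush(node, backend):
    """Drain pending writes to the backend and release the mirrored copies."""
    while node.nvram:
        data = node.nvram.pop(0)
        backend.append(data)
        node.partner.partner_mirror.remove(data)


def take_over(survivor, backend):
    """After the partner fails, complete its pending writes from the mirror."""
    backend.extend(survivor.partner_mirror)
    survivor.partner_mirror.clear()


backend = []
a, b = make_cluster()
client_write(a, "w1")
flush(a, backend)        # w1 reaches the backend normally
client_write(a, "w2")    # node-a fails before flushing w2
take_over(b, backend)    # node-b completes w2 from its mirror
print(backend)           # ['w1', 'w2']
```

The point of the model is that a write is acknowledged only after two copies exist, so the loss of one node does not lose an acknowledged write.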

System Under Test Configuration Notes

The system under test consisted of two Hitachi Unified Storage file module 4060 nodes, connected to a HUS150 storage system via two Brocade 5320 FC switches. The nodes were configured in an active-active cluster mode, directly connected by a redundant pair of 10GbE connections to the cluster interconnect ports. The HUS150 storage system contained 300 10K RPM SAS drives. All connectivity from the servers to the storage was via two 8Gbps switched FC fabrics. For these tests, 2 zones were created on each FC switch. Each 4060 server was connected to each zone via 2 integrated 8Gbps FC ports (corresponding to 2 H-ports). The HUS150 storage system was connected to the 2 zones (corresponding to 8 FC ports), providing the I/O path from the servers to the storage. The System Management Unit (SMU) is part of the total system solution, but is used for management purposes only and was not active during the test.

Other System Notes

Test Environment Bill of Materials

| Item No | Qty | Vendor | Model/Name | Description |
|---|---|---|---|---|
| 1 | 16 | Oracle | Sun Fire x2200 | RHEL 5 clients, two Dual core processors, 8GB RAM |
| 2 | 1 | Brocade | TurboIron | Brocade TurboIron 24X, 24 port 10GbE Switch |

Load Generators

| | |
|---|---|
| LG Type Name | LG1 |
| BOM Item # | 1 |
| Processor Name | AMD Opteron |
| Processor Speed | 2.6 GHz |
| Number of Processors (chips) | 2 |
| Number of Cores/Chip | 2 |
| Memory Size | 8 GB |
| Operating System | Red Hat Enterprise Linux 5, 2.6.18-8.el5 kernel |
| Network Type | 1 x Intel XF SR PCIe 10GbE |

Load Generator (LG) Configuration

Benchmark Parameters

| | |
|---|---|
| Network Attached Storage Type | NFS V3 |
| Number of Load Generators | 16 |
| Number of Processes per LG | 56 |
| Biod Max Read Setting | 2 |
| Biod Max Write Setting | 2 |
| Block Size | 64 |

Testbed Configuration

| LG No | LG Type | Network | Target Filesystems | Notes |
|---|---|---|---|---|
| 78..93 | LG1 | 1 | /w/d0, /w/d1, /w/d2, /w/d3 | None |

Load Generator Configuration Notes

All clients were connected to one common namespace on the server cluster through a single 10GbE network.

Uniform Access Rule Compliance

Each load-generating client hosted 56 processes, all accessing a single namespace on the 4060 cluster through a common network connection. There were 4 target file systems (/w/d0, /w/d1, /w/d2, /w/d3), presented as a single cluster namespace through a virtual root (/w) that was accessible to all clients. Each load generator mounted every filesystem target (/w/d0, /w/d1, /w/d2, /w/d3) and cycled through all the file systems in sequence.
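The even spread of load across the four targets can be sketched as a round-robin assignment. This is an illustration only: the `assign_targets` helper is hypothetical, and the assumption that process i on each client uses target i modulo 4 is one plausible reading of "cycled through all the file systems in sequence", not a description of the benchmark's internals.

```python
from collections import Counter

LOAD_GENERATORS = 16
PROCESSES_PER_LG = 56
TARGETS = ["/w/d0", "/w/d1", "/w/d2", "/w/d3"]
PEAK_OPS_PER_SEC = 147957  # peak throughput from the Performance table


def assign_targets(process_count, targets):
    """Round-robin assignment (assumed): process i uses targets[i % len(targets)]."""
    return [targets[i % len(targets)] for i in range(process_count)]


per_lg = Counter(assign_targets(PROCESSES_PER_LG, TARGETS))
total_processes = LOAD_GENERATORS * PROCESSES_PER_LG
processes_per_target = {t: n * LOAD_GENERATORS for t, n in per_lg.items()}
ops_per_process = PEAK_OPS_PER_SEC / total_processes

print(dict(per_lg))             # 14 processes per target on each client
print(processes_per_target)     # 224 processes per target across all clients
print(total_processes)          # 896
print(f"{ops_per_process:.1f} ops/sec per process at peak")
```

Because 56 is a multiple of 4, every file system receives the same number of processes from every client under this assignment.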

Other Notes

Hitachi Unified Storage, Hitachi Unified Storage VM, Hitachi NAS Platform and Virtual Storage Platform are registered trademarks of Hitachi Data Systems, Inc. in the United States, other countries, or both. All other trademarks belong to their respective owners and should be treated as such.

Config Diagrams

Generated on Tue Jul 23 17:53:53 2013 by SPECsfs2008 HTML Formatter

Copyright © 1997-2008 Standard Performance Evaluation Corporation

First published at SPEC.org on 23-Jul-2013